The full-stack founder: building leverage with AI

AI is starting to reshape how work gets done inside small teams.

Founders are expected to operate across strategy, marketing, AI systems, and revenue, often with very small teams and very high expectations.

That pressure is real, and more tools do not necessarily make it easier to decide where to focus or how to move faster.

In our recent webinar, The full-stack founder: building real leverage with AI, we explored how teams are starting to run parts of their go-to-market using simple, practical setups. Together with Jacob Bank, founder of Relay.app, and Rashi Singhania, who leads growth for AI products at Airtable, we walked through concrete examples of how this is already happening.

What you need before the tools

Most founders are running four jobs at once: strategy, marketing, operations, revenue. The team is small. The expectations are not.

AI was supposed to reduce the load. For many founders, it has added a new problem: more output that sounds like everyone else, and a growing list of tools with no coherent system behind them.

The honest answer is that most early-stage founders underinvest in four areas: audience insight, point of view, brand identity, and trust. Each one is a prerequisite for using AI well. Each one is also where AI creates the most leverage, once the groundwork exists.

Audience insight is not a persona document. It is accumulated from live conversations, from sales transcripts, from community signal. Founders who move fast on tools sometimes skip the source. They miss the emotional drivers that make messaging land. Customer interviews, pitch events, and advisor relationships are how you collect the nuance that AI cannot infer on its own.

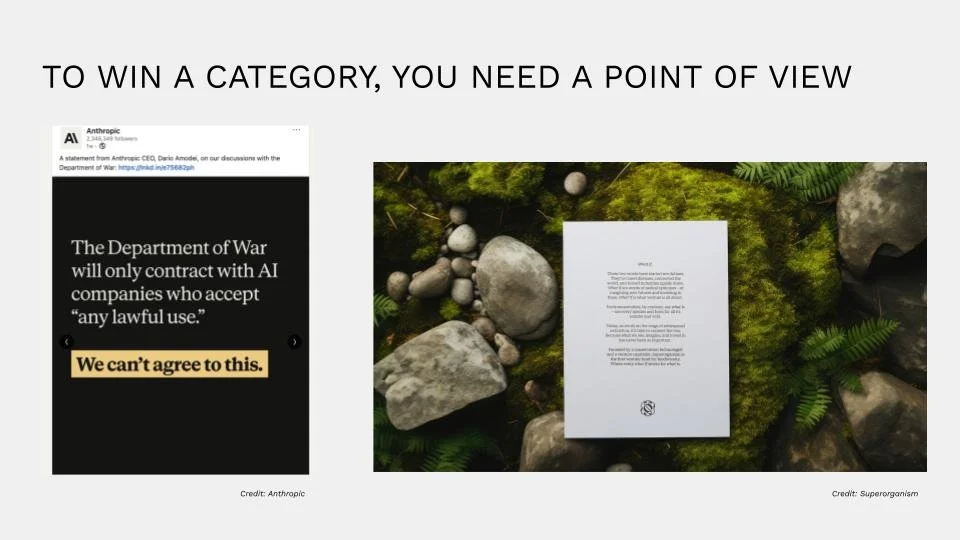

Point of view determines whether your content builds authority or fills a feed. Anthropic is a useful example. OpenAI had early distribution. Anthropic earned category credibility by stating a clear position publicly and consistently. The result was a measurable shift in adoption. Founders do not need to take every position publicly. They do need to document their thesis clearly enough that AI can reflect it.

Brand identity shapes how your company makes people feel, especially as you scale visual and written output with AI tools. Lovable built a product that radiates warmth. That was a deliberate design decision, not an aesthetic accident.

AI tools do not have a point of view. You do. The work is codifying your taste, your voice, and your perspective on the market, then teaching your AI to reflect it. Founders are not prompt writers. They are directors. The absence of that direction does not produce neutral output. It produces drift.

Trust has become a competitive variable. Research consistently shows declining trust in institutions and rising trust in individuals. Founders who document their origin story, collect social proof systematically, and build in public create a credibility layer that compounds. That layer is also something AI can use.

When your agents pull from a repository that includes your voice, your beliefs, and your story, the output reflects a point of view. Without it, the output reflects the average.

The source of truth system

One way to think about the foundation work: you are building a repository that your AI and AI agents will work from. Three buckets matter most.

The first is your style guide. Not a vague document about tone, but a specific set of rules about how you speak, what you avoid, and how past language has performed. The more precise the guide, the more consistent the output.

The second is your messaging: frameworks, positioning hierarchy, key claims, and, increasingly, an answer engine optimization (AEO) layer. AEO, the evolution of SEO, rewards content that answers specific questions. FAQ structures embedded in blog content now influence how large language models surface your brand in response to relevant queries.

The third is product knowledge and competitive data. What you do, how it compares, and why the difference matters. This is the foundation your agents use to make claims that hold up under scrutiny.

These three buckets, built with enough precision, give your AI something distinctive to build from.
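One way to picture the repository is as a small data structure with one field per bucket. The sketch below is illustrative only: the class name `SourceOfTruth`, its field names, and the sample entries are assumptions made for this example, not part of any tool mentioned in the webinar.

```python
from dataclasses import dataclass, field


@dataclass
class SourceOfTruth:
    """Hypothetical container for the three buckets described above."""
    style_guide: list[str] = field(default_factory=list)        # voice rules, words to avoid
    messaging: list[str] = field(default_factory=list)          # positioning, key claims, FAQ answers
    product_knowledge: list[str] = field(default_factory=list)  # product facts, competitive data

    def missing_buckets(self) -> list[str]:
        """Return the names of buckets that are still empty."""
        buckets = {
            "style_guide": self.style_guide,
            "messaging": self.messaging,
            "product_knowledge": self.product_knowledge,
        }
        return [name for name, items in buckets.items() if not items]

    def as_context(self) -> str:
        """Render the non-empty buckets as one text block to hand to an agent."""
        sections = [
            ("STYLE GUIDE", self.style_guide),
            ("MESSAGING", self.messaging),
            ("PRODUCT AND COMPETITORS", self.product_knowledge),
        ]
        parts = []
        for title, items in sections:
            if items:
                parts.append(title + "\n" + "\n".join(f"- {item}" for item in items))
        return "\n\n".join(parts)


# Placeholder entries; replace them with your own rules and claims.
repo = SourceOfTruth(
    style_guide=["Write in short, direct sentences.", "Avoid the word 'revolutionary'."],
    messaging=["Key claim: (placeholder for your own claim)"],
)
print(repo.missing_buckets())
print(repo.as_context())
```

The `missing_buckets` check is the useful part: it shows which bucket still needs work before an agent is asked to write anything.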

How a 9-person team runs 60 agents

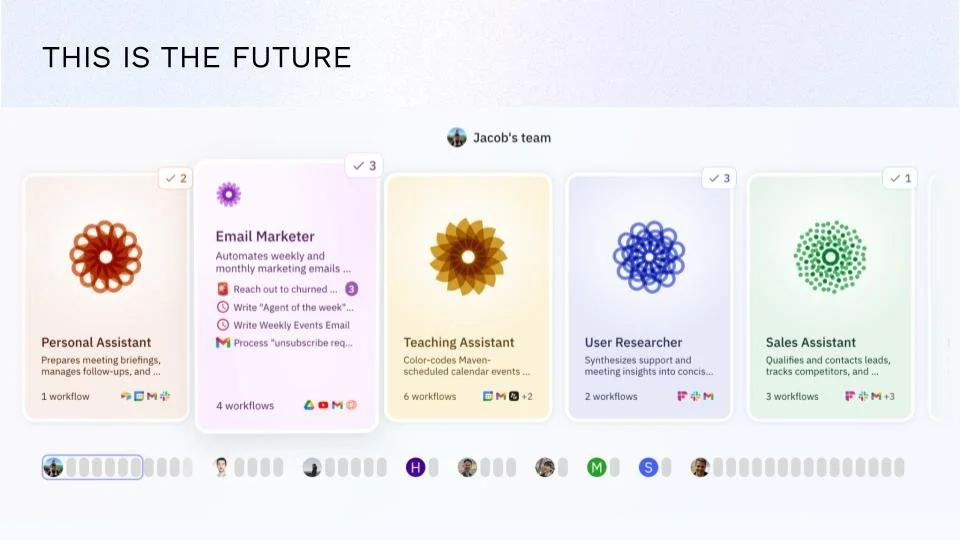

Jacob Bank is the founder and CEO of Relay.app, a platform for building and managing AI agents. He is also a useful case study in what a lean operating system looks like in practice.

His team has nine people. Five engineers, two designers, one product person, and himself. He covers general and administrative functions, go-to-market, finance, legal, HR, recruiting, sales, marketing, support, and customer success. The gap between what a team of nine can do and what those functions require is covered by approximately 60 AI agents.

The agents are structured around job descriptions. Each one has defined responsibilities, operates proactively, and accepts natural language feedback. Jacob described the mental model clearly: treat agents as autonomous workers, not as tools you prompt on demand.

The breakdown across his team reflects where this class of agent creates the most leverage. In go-to-market and general and administrative functions, structured, persistent agents run reliably. In research and development, where work is more bespoke and iterative, a different category of agent, like Claude Code or Cursor, fits better. A few of the agents he demonstrated in the session are worth examining in detail.

The personal assistant handles calendar management, meeting briefings, post-meeting follow-up drafts, daily conflict notifications, RSVP follow-ups, cold email filtering, newsletter digests, and task reminders. It also now tracks team birthdays and company anniversaries, added after a long-tenured team member's birthday went unnoticed.

The follow-up drafting workflow is a clean example of how these agents operate. When a meeting transcript is created in Fireflies, the agent first determines whether a follow-up is warranted. Not every meeting requires one. If it does, the agent pulls additional context, drafts an email, and saves it as a Gmail draft for review. Changing how the agent behaves requires no technical language: feedback is given in plain, natural language.
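The shape of that workflow can be sketched as a gate followed by three steps. This is a minimal illustration, not how Relay.app implements it: the function `draft_follow_up` and its four callable parameters are hypothetical names, and the lambdas stand in for the Fireflies, context lookup, model, and Gmail steps.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    subject: str
    body: str


def draft_follow_up(
    transcript: str,
    needs_follow_up: Callable[[str], bool],
    fetch_context: Callable[[str], str],
    write_email: Callable[[str, str], Draft],
    save_draft: Callable[[Draft], None],
) -> Optional[Draft]:
    """Run the steps in order; return None when no follow-up is warranted."""
    if not needs_follow_up(transcript):
        return None  # not every meeting requires a follow-up
    context = fetch_context(transcript)
    draft = write_email(transcript, context)
    save_draft(draft)  # saved for human review; nothing is sent here
    return draft


# Stand-in steps for the demo; a real setup would call actual services.
saved: list[Draft] = []
result = draft_follow_up(
    "Action item: send pricing details by Friday.",
    needs_follow_up=lambda t: "action item" in t.lower(),
    fetch_context=lambda t: "(prior emails with this contact)",
    write_email=lambda t, c: Draft("Following up", f"Notes: {t}\nContext: {c}"),
    save_draft=saved.append,
)
print(result)
```

Keeping the decision step separate from the drafting step is the design choice that matters: the agent can decline to act, and the human still has the final send.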

The AEO agent tracks how Relay.app appears in responses from large language models. The setup involves a table of ten priority questions, for example: what is the best AI agent builder, what is the best Zapier alternative, which platform is easiest to use. Each month, the agent queries ChatGPT, Perplexity, Gemini, and Claude with those questions, logs which models mention Relay and in what context, and produces a consolidated report.
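The core loop of such a tracker is small: every question goes to every model, and each answer is checked for a brand mention. The sketch below assumes a caller-supplied `ask` function, since each provider has its own API. `run_aeo_check`, `mention_rate`, and `fake_ask` are hypothetical names, and the canned replies are invented for the demo.

```python
from typing import Callable

MODELS = ["ChatGPT", "Perplexity", "Gemini", "Claude"]


def run_aeo_check(
    questions: list[str],
    brand: str,
    ask: Callable[[str, str], str],
) -> list[dict]:
    """Ask every model every question and log whether the brand is mentioned."""
    rows = []
    for question in questions:
        for model in MODELS:
            answer = ask(model, question)
            rows.append({
                "model": model,
                "question": question,
                "mentioned": brand.lower() in answer.lower(),
                "answer": answer,  # kept so the report can show the context
            })
    return rows


def mention_rate(rows: list[dict]) -> dict[str, float]:
    """Share of questions, per model, whose answer mentions the brand."""
    rates = {}
    for model in MODELS:
        model_rows = [r for r in rows if r["model"] == model]
        if model_rows:
            rates[model] = sum(r["mentioned"] for r in model_rows) / len(model_rows)
    return rates


def fake_ask(model: str, question: str) -> str:
    # Stand-in for a real API call; these replies are made up.
    return "Relay.app is one option." if model == "Claude" else "There are many options."


rows = run_aeo_check(["What is the best AI agent builder?"], "Relay", fake_ask)
report = mention_rate(rows)
print(report)
```

A substring match is a crude test for a mention. Storing the full answer alongside the flag leaves room for a closer read of the context in the monthly report.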

The email marketer runs every Thursday. It asks Jacob for anything special to highlight that week, with a six-hour window to respond. If there is no reply, it proceeds. It pulls recent videos, events, and previous newsletters, writes a draft, saves it to a Google Doc, and notifies the team. Because Loops, their mailing list provider, has no API, the last step requires a different tool.
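The six-hour window is a simple "ask, then proceed anyway" rule. A minimal sketch of that decision follows, with the hypothetical function `highlights_for_draft` standing in for whatever the agent does internally. Only the six-hour figure comes from the session.

```python
from datetime import datetime, timedelta
from typing import Optional

RESPONSE_WINDOW = timedelta(hours=6)


def highlights_for_draft(
    asked_at: datetime,
    now: datetime,
    reply: Optional[str],
) -> Optional[list[str]]:
    """Decide whether the newsletter run can proceed.

    Returns None while still waiting for a reply. Otherwise returns the
    highlights to include, which is an empty list when the window closed
    without a reply.
    """
    if reply is not None:
        return [reply]
    if now - asked_at >= RESPONSE_WINDOW:
        return []
    return None


asked = datetime(2025, 1, 2, 9, 0)  # arbitrary example timestamp
print(highlights_for_draft(asked, asked + timedelta(hours=1), None))
print(highlights_for_draft(asked, asked + timedelta(hours=2), "New video"))
print(highlights_for_draft(asked, asked + timedelta(hours=6), None))
```

The point of the timeout is that the human is optional: the run never blocks on a missing reply.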

Three tools, three jobs

Jacob described his daily driver tools as three distinct categories, each suited to a different class of work.

Claude on web handles synchronous thought partnership. Brainstorming, strategic review, analysis. In the newsletter example, Jacob asked Claude to evaluate the newsletter and suggest improvements. Claude had access to Gmail through a connected integration and pulled historical examples before offering recommendations.

Claude Co-Work handles tasks that require access to a local machine. When the newsletter draft exists in a Google Doc and needs to be published in Loops, Co-Work reads the draft, proposes a subject line and campaign name, navigates to Loops in the browser, duplicates the most recent campaign, updates the fields, and prepares the campaign for a final review.

Relay.app agents handle everything that should run proactively and automatically in the cloud, whether or not his computer is on. Cloud-based agents and local agents like Co-Work serve different functions. Co-Work is visible, resource-intensive, and best suited for one-off or supplemental tasks. Cloud agents run continuously, operate in the background, and scale across the team.

Jacob offered a practical bridge between the two: use Co-Work to prototype a one-off task, ask it to generate the prompt and structure needed to run that task as a repeatable cloud agent, then migrate the output into Relay.app. The exploration happens locally. The routine runs in the cloud.

Scaling go-to-market at the speed of light

Rashi Singhania leads growth for Airtable's Super Agent and previously led growth at Flipkart, Strivr, and BioRender. She offered three tactical frameworks from her current operating practice.

The first centers on how to stay current in a fast-moving category without spending hours on manual research.

Her setup uses Grok AI, which has native access to X. She built a daily digest task with a defined brief: product context, competitor list, specific signals to track. The task runs every 24 hours and emails her a structured summary. Each item is flagged as must act, worth watching, or low priority. The hottest topics and viral content from the previous day are included.

The brief covers funding announcements, product launches, partnership activity, and platform changes among key competitors. It also surfaces which posts are gaining engagement in the space and which creators or thought leaders are driving conversation. The output feeds directly into content planning: what founders and brand accounts should publish, which conversations to enter, and where the market is moving.
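The three-level flagging is what makes the digest scannable. As a rough sketch of the output side only, assuming the items have already been collected and labeled upstream, grouping by priority might look like this. `DigestItem`, `format_digest`, and the sample headlines are hypothetical.

```python
from dataclasses import dataclass

# Most urgent first, matching the three flags in the digest.
PRIORITIES = ["must act", "worth watching", "low priority"]


@dataclass
class DigestItem:
    headline: str
    priority: str  # expected to be one of PRIORITIES


def format_digest(items: list[DigestItem]) -> str:
    """Group items under their priority label, most urgent first."""
    lines = []
    for priority in PRIORITIES:
        matching = [item for item in items if item.priority == priority]
        if not matching:
            continue  # skip empty sections
        lines.append(priority.upper())
        lines.extend(f"- {item.headline}" for item in matching)
    return "\n".join(lines)


# Placeholder items for the demo.
items = [
    DigestItem("Example: a platform changed its pricing", "low priority"),
    DigestItem("Example: a competitor launched a feature", "must act"),
]
digest = format_digest(items)
print(digest)
```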

Competitive creative analysis

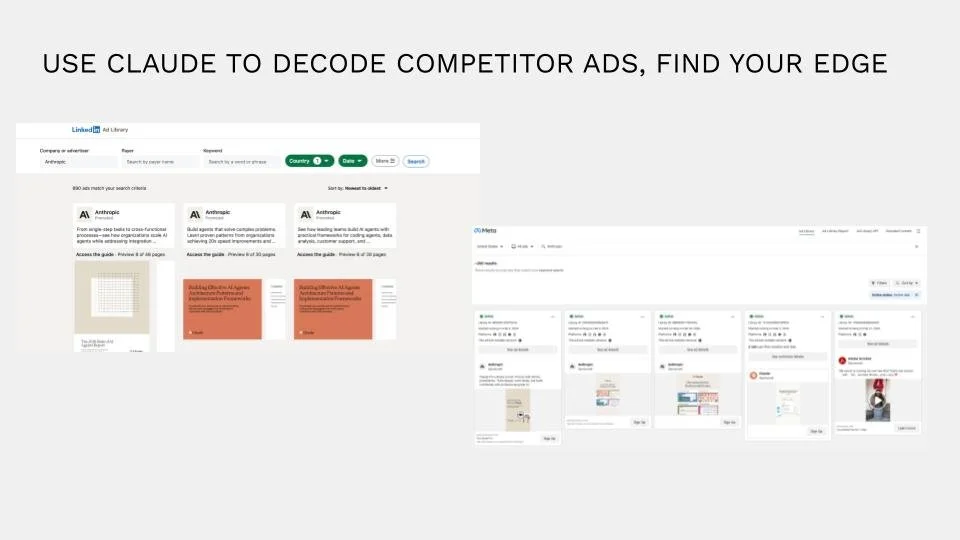

The second tactic involves ad libraries. Every major platform (Meta, Instagram, TikTok, LinkedIn) maintains a public library of active and historical ads filterable by advertiser. Most marketers know this. Rashi's application extends it.

She described feeding screenshots of competitor ads, across multiple platforms, into Claude. The prompt asks Claude to identify patterns, find gaps in their positioning, and surface differentiation opportunities relative to your own creative. When Claude has context about your product, your ICP, and your brand guidelines, it can make those comparisons with specificity.

The same method applies to your own creative history. Comparing past ads against current competitor ads surfaces what you repeat, what you have abandoned, and what you have never tested. The analysis is not a replacement for performance data. It is a faster way to generate hypotheses before spending.

A 48-hour campaign cycle

The third tactic is operational. At Super Agent, a two-person marketing function supports feature launches that can happen in any given week, sometimes more often.

The old process for a paid media campaign (discovery, ICP definition, messaging framework, channel selection, creative development, budget approval) ran three weeks at minimum. The new process compresses that cycle into 48 hours or less. The architecture is a Claude project with six foundational documents loaded as permanent context: brand guidelines, messaging pillars and tone of voice, founder voice and personal brand, native channel guidelines and ad copy best practices, competitive battle cards, and ICP segmentation.

The process begins when the product lead completes a campaign intake form. The form captures the feature, the intended audience, the differentiation, and what they want to test. That document is uploaded to the Claude project, which reads it against the foundational context, flags any issues before proceeding, and generates a campaign plan.

The flagging step is deliberate. Before generating output, the project reviews the intake form for competitive claims that cannot be verified, generic use cases that need sharpening, metrics that do not add up, and missing fields. The flags surface before any creative is produced.
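Two of those four checks, missing fields and unverifiable competitive claims, are mechanical enough to sketch in code. The other two, generic use cases and metrics that do not add up, rely on the model's judgment and are left out here. The field names, the semicolon-separated `competitive_claims` field, and the `verified_claims` set are all assumptions made for this illustration, not the actual intake form.

```python
# Hypothetical field names based on what the intake form is said to capture.
REQUIRED_FIELDS = ["feature", "audience", "differentiation", "test_goal"]


def flag_intake(form: dict[str, str], verified_claims: set[str]) -> list[str]:
    """Return human-readable flags; an empty list means the form can proceed."""
    flags = []
    for name in REQUIRED_FIELDS:
        if not form.get(name, "").strip():
            flags.append(f"missing field: {name}")
    # Claims are assumed to be entered as one semicolon-separated string.
    for claim in form.get("competitive_claims", "").split(";"):
        claim = claim.strip()
        if claim and claim not in verified_claims:
            flags.append(f"unverified competitive claim: {claim}")
    return flags


# Placeholder form for the demo.
form = {
    "feature": "Example feature",
    "audience": "Example audience",
    "differentiation": "Example differentiation",
    "competitive_claims": "faster than X; cheaper than Y",
}
flags = flag_intake(form, verified_claims={"cheaper than Y"})
print(flags)
```

Running the checks before any creative is generated mirrors the order described above: a flagged form goes back to the product lead, not forward into the campaign plan.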

The campaign plan that emerges is a one-to-two page brief. Who is being targeted. What they will see on each channel. What the messaging themes are. What the team needs to review. The goal is to get to market fast, collect early behavioral data, and iterate.

Rashi named the underlying condition that makes this necessary: in AI, you find product-market fit every three months, if not sooner. The marketing system has to match that cadence.

One last thing

Jacob Bank is the founder and CEO of Relay.app. Try Relay.app and use the code XOOGLER for 500 bonus AI credits at bonus.relay.app.

Rashi Singhania leads growth at Airtable. Try Super Agent free for 2 months at superagent.com using the code XOOGLER.

Veronique Lafargue is the founder of Maison Lafargue. Download the founder story guide, or apply for a messaging clinic.